Model Deprecation Is the Real Vendor Lock-In

I shipped a production AI system in January. The model it ran on was deprecated by March. Not replaced with a better version. Deprecated. Removed from the API entirely.

OpenAI's 3-to-6-month deprecation windows are now the floor. Gemini 3 Pro went down. Anthropic deprecated older Claude versions. Everyone said "oh, just upgrade to the new model." But in production that is not a free operation, and it is not just a code change.

The Technical Problem

The technical problem is easy to state: you validated against gpt-4-turbo-2025-11-15. Now that model doesn't exist, and gpt-4-turbo-2026-02-15 is the official replacement. The behavior changed subtly. Cost changed. Latency changed. Every benchmark you ran against the old version is invalidated.

The Organizational Problem

But the real problem is organizational. You have to re-validate the new model against production workload. You have to test it on the specific edge cases that matter to your customers. You have to convince your team it's safe to push. That takes weeks, not days.
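One way to shorten that re-validation is to keep the customer-critical edge cases as an executable suite, so the question "is the new model safe?" has a concrete answer. The sketch below is a minimal illustration, not a full evaluation framework. `GoldenCase`, `run_golden_cases`, and `fake_candidate_model` are hypothetical names, and the fake model stands in for a real provider call.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class GoldenCase:
    """One production edge case plus the rule an acceptable answer must satisfy."""
    name: str
    prompt: str
    check: Callable[[str], bool]  # returns True when the output is acceptable


def run_golden_cases(model: Callable[[str], str], cases: List[GoldenCase]) -> List[str]:
    """Run every case against the model and return the names of the failing ones."""
    failures = []
    for case in cases:
        output = model(case.prompt)
        if not case.check(output):
            failures.append(case.name)
    return failures


# Assumption: a stand-in for the candidate model. A real version would call the provider.
def fake_candidate_model(prompt: str) -> str:
    if "refund" in prompt:
        return "Refunds are processed within 5 business days."
    return "I'm not sure."


cases = [
    GoldenCase("refund_policy", "What is the refund window?",
               lambda out: "5 business days" in out),
    GoldenCase("warranty_policy", "What is the warranty period?",
               lambda out: "warranty" in out.lower()),
]

failures = run_golden_cases(fake_candidate_model, cases)
print(failures)  # names of the cases the candidate model regressed on
```

A non-empty failure list is the evidence the team needs before deciding whether to push, and the same suite can be rerun unchanged on the next deprecation.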

And while you're validating, the clock keeps running on the old model. Once it is shut off, you can't roll back to the known-good version. You're stuck: either deploy the new model and hope, or keep running on the deprecated version and hope the API doesn't shut it down tomorrow.

The Real Lock-In

This is the real vendor lock-in. Not switching costs. Not API contracts. It's that the ground beneath your feet keeps moving, and moving with it is expensive. The model you chose isn't stable. Every quarter it gets replaced, and you have to keep up or fall behind.

The industry talks about "model risk" in terms of hallucination and bias. The actual production risk? The model you validated, tested, and tuned your prompts for simply stops existing. I learned to build abstraction layers that treat models as swappable components, not because I was being clever, but because I got burned.

What Actually Works

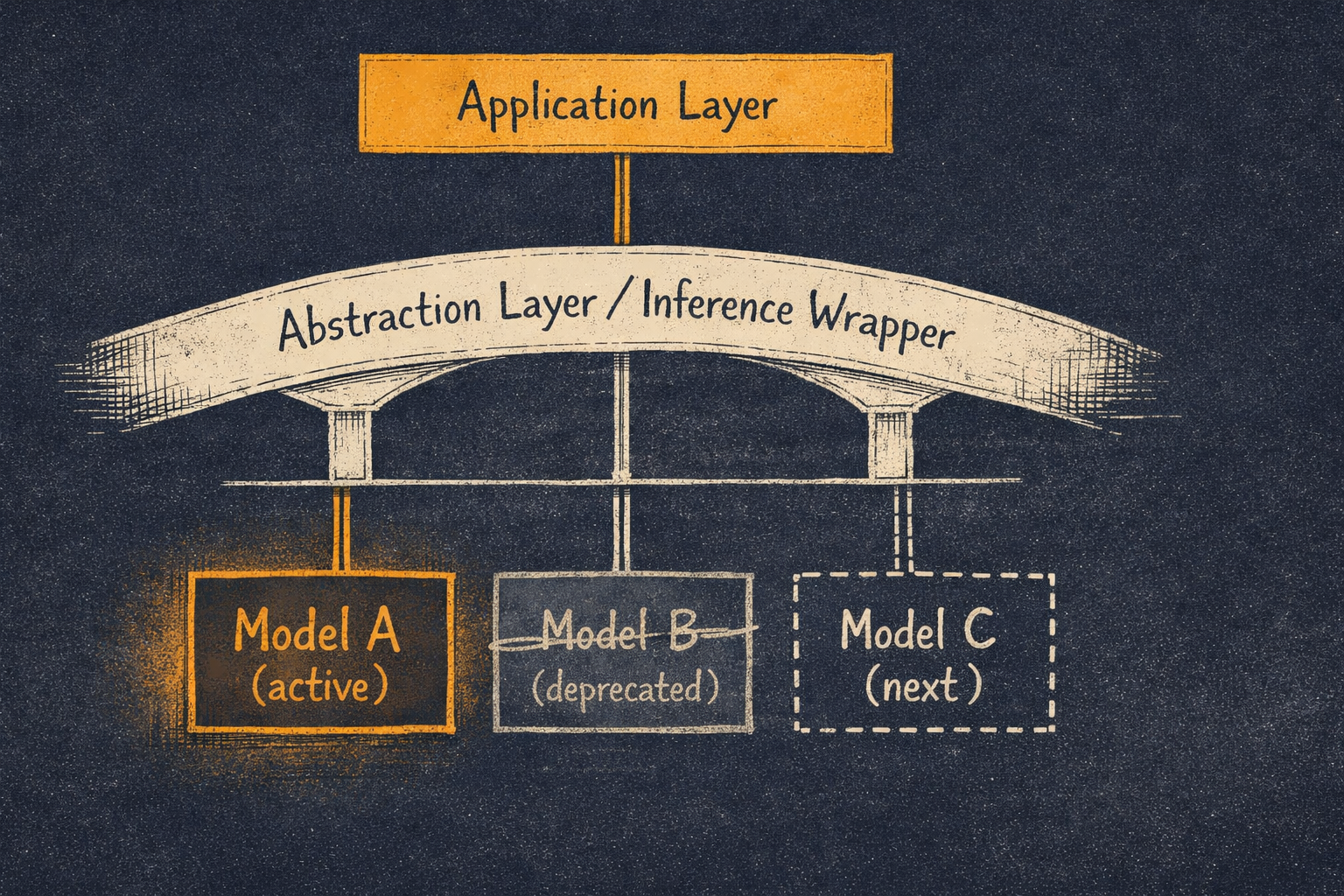

Build an abstraction layer between your application and the model provider. Your app talks to an interface, not directly to gpt-4-turbo-2025-11-15. When deprecation happens, you update one place, validate once, and deploy.
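As a rough sketch of what "one place" can look like, the example below routes every call through a small registry keyed by the job the model does, not by the model ID. All names here (`TextModel`, `EchoModel`, `ModelRegistry`, `summarize`) are hypothetical, and `EchoModel` is a stand-in where a real backend would wrap a provider SDK.

```python
from typing import Dict, Protocol


class TextModel(Protocol):
    """The only surface the application is allowed to depend on."""
    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Stand-in backend (assumption): a real one would call a provider SDK here."""
    def __init__(self, model_id: str) -> None:
        self.model_id = model_id

    def complete(self, prompt: str) -> str:
        return f"[{self.model_id}] {prompt}"


class ModelRegistry:
    """Maps an application role (e.g. 'summarizer') to whichever backend serves it."""
    def __init__(self) -> None:
        self._backends: Dict[str, TextModel] = {}

    def register(self, role: str, backend: TextModel) -> None:
        self._backends[role] = backend

    def get(self, role: str) -> TextModel:
        return self._backends[role]


registry = ModelRegistry()
registry.register("summarizer", EchoModel("gpt-4-turbo-2025-11-15"))


def summarize(text: str) -> str:
    # Application code names the role, never the model version.
    return registry.get("summarizer").complete(f"Summarize: {text}")


before = summarize("quarterly report")

# Deprecation day: the swap is a single registration, and summarize() is untouched.
registry.register("summarizer", EchoModel("gpt-4-turbo-2026-02-15"))
after = summarize("quarterly report")

print(before)
print(after)
```

The design choice that matters is that the version string appears only at registration time, so a deprecation touches configuration and the validation suite, not the call sites.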

The pattern: use a model-agnostic wrapper. If Provider A breaks something, you can swap in another provider as a test. Benchmark new models behind the wrapper before committing to them. During a transition, keep the newest version and a fallback version running in parallel for a few weeks.
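The parallel run can be sketched as a shadow comparison: the current model keeps serving traffic while the candidate answers the same prompts on the side, and you record where they differ and how long each takes. This is a minimal sketch under stated assumptions. `ShadowResult`, `shadow_run`, and `agreement_rate` are hypothetical names, the two lambda-style models are stand-ins for real provider calls, and exact string equality is a deliberately crude measure of agreement that a real comparison would replace with task-specific scoring.

```python
import time
from dataclasses import dataclass
from typing import Callable, List, Tuple

Model = Callable[[str], str]


@dataclass
class ShadowResult:
    prompt: str
    primary_output: str
    candidate_output: str
    primary_seconds: float
    candidate_seconds: float

    @property
    def agrees(self) -> bool:
        # Crude agreement measure (assumption): exact match after trimming whitespace.
        return self.primary_output.strip() == self.candidate_output.strip()


def timed(model: Model, prompt: str) -> Tuple[str, float]:
    """Call the model and return its output with the elapsed wall-clock seconds."""
    start = time.perf_counter()
    output = model(prompt)
    return output, time.perf_counter() - start


def shadow_run(primary: Model, candidate: Model, prompts: List[str]) -> List[ShadowResult]:
    """Send each prompt to both models and record outputs and latency side by side."""
    results = []
    for prompt in prompts:
        p_out, p_secs = timed(primary, prompt)
        c_out, c_secs = timed(candidate, prompt)
        results.append(ShadowResult(prompt, p_out, c_out, p_secs, c_secs))
    return results


def agreement_rate(results: List[ShadowResult]) -> float:
    """Fraction of prompts on which both models produced the same output."""
    if not results:
        return 0.0
    return sum(r.agrees for r in results) / len(results)


# Stand-in models (assumption): the candidate behaves differently on one edge case.
def old_model(prompt: str) -> str:
    return prompt.upper()


def new_model(prompt: str) -> str:
    return prompt.lower() if "edge" in prompt else prompt.upper()


results = shadow_run(old_model, new_model, ["hello", "edge case", "world", "ok"])
disagreements = [r.prompt for r in results if not r.agrees]
print(agreement_rate(results), disagreements)
```

The list of disagreeing prompts is usually more useful than the rate itself, because it points directly at the inputs to review before cutting over.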

This isn't theoretical. I spent January validating against one model and March validating against another. The abstraction layer meant I could run both in parallel, measure the actual behavior differences, and swap on a timeline that made sense for the business.

The Takeaway

With deprecation cycles shortening from years to months, every team shipping on a specific model version is accumulating technical debt it doesn't know it has. Deprecation cycles are a fact of life now. You can't fight them. You can only build your infrastructure to absorb them cheaply. Treat models as swappable. Build once. Validate systematically. Deploy confidently.